Organizations are looking for ways to modernize their applications and migrate them to the cloud. Amazon Web Services (AWS) offers a compelling set of infrastructure, automation, security and compliance services that provide a ready-made on-ramp to cloud adoption. However, organizations face a dilemma over the optimal cloud migration pathway: lift & shift or rearchitect? The principals at stackArmor have successfully performed over 100 application migration and modernization programs and projects since 2009. Recovery.gov was the first system migrated to the AWS cloud and to receive the Authority-To-Operate (ATO). Since then we have supported a number of major cloud modernization and migration initiatives at US Federal, DOD, public sector and commercial organizations.

We recently completed a project for the CIO of a major public sector organization serving nearly 500,000 customers with a portfolio of 100 applications consisting of custom applications, COTS and SaaS. The CIO laid out a modernization strategy centered on adopting cloud computing and modernizing its IT infrastructure to improve the reliability of its digital services and reduce costs. Specific requirements included:

- Portability using containers

- Lower costs and improved efficiency using PaaS and microservices-based architectures

- Better reliability and performance through a resilient cloud infrastructure

stackArmor executed on this vision from a cold start: the customer had no prior experience with containers or with how they are built, deployed and operated. Using stackArmor’s Agile Cloud Transformation (ACT) methodology and ready-built landing zones, within 6 weeks we delivered a functioning AWS-based hosting enclave with VPN connectivity running a containerized web application.

Specific activities performed as part of the project included:

- Set up an AWS account and VPC

- Configure container and CI/CD components

- Establish secure VPN connectivity to the legacy data center for Oracle Data Services

- Create Docker images of the web application

- Demonstrate the end-to-end hosting and performance of the application

- Additionally, demonstrate the use of AWS DMS to facilitate the migration from Oracle RDBMS to an open source database such as PostgreSQL

The specific steps in executing the containerization project are described below.

1. Set Up AWS Account and VPC

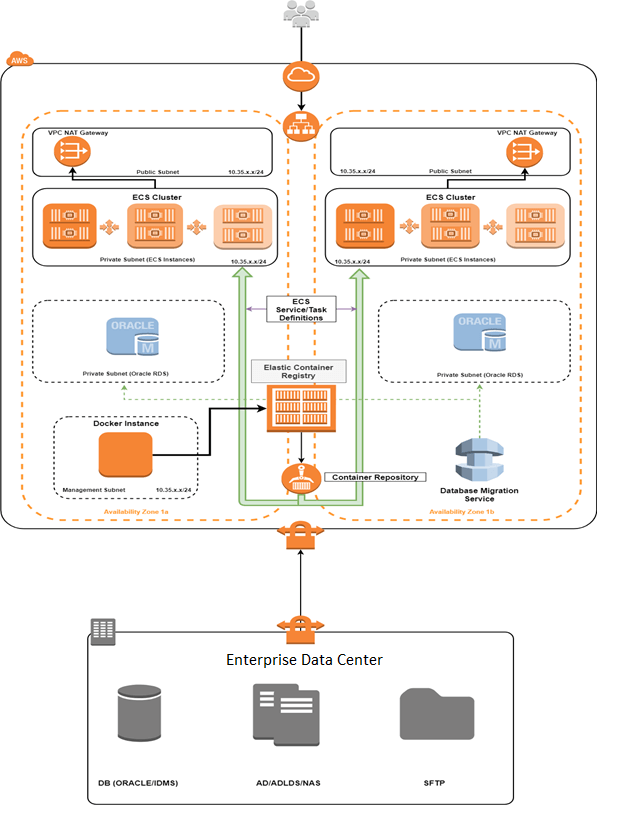

The AWS VPC was configured in the US East (Northern Virginia) Region with a defined CIDR block. Subnets created within the VPC have a /24 network prefix. Two public subnets and two private subnets were deployed across two availability zones for the high availability of the web application. The public subnets contained two VPC NAT Gateways used by all instances on the private subnets to make outbound connections.
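The subnet layout described above can be sketched with Python's standard `ipaddress` module. This is only an illustration: the VPC CIDR block `10.0.0.0/16` and the availability zone names are assumptions, since the actual values are not given here.

```python
import ipaddress

# Hypothetical VPC CIDR; the real range used in the project is not stated.
VPC_CIDR = ipaddress.ip_network("10.0.0.0/16")

# Two tiers across two availability zones, as described in the text.
TIERS = ["public", "private"]
ZONES = ["us-east-1a", "us-east-1b"]  # assumed AZ names in Northern Virginia


def plan_subnets(vpc, tiers, zones, prefix=24):
    """Assign consecutive /24 blocks to every (tier, zone) pair."""
    blocks = vpc.subnets(new_prefix=prefix)  # generator of /24 networks
    return [
        {"tier": tier, "zone": zone, "cidr": str(next(blocks))}
        for tier in tiers
        for zone in zones
    ]


if __name__ == "__main__":
    for subnet in plan_subnets(VPC_CIDR, TIERS, ZONES):
        print(f"{subnet['tier']:<8}{subnet['zone']:<12}{subnet['cidr']}")
```

Planning the blocks up front makes it easy to confirm that no two subnets overlap before any resources are created.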

One management subnet was reserved for instances used as development and configuration servers. Three subnets were deployed across three availability zones for the high availability of Amazon Relational Database Service (Amazon RDS) instances. Using AWS DMS, the Oracle databases can be migrated from the enterprise data center to RDS instances residing in these subnets. A VPN tunnel was established between the Cisco gateway appliance in the enterprise data center and the VPC’s Virtual Private Gateway.
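As a rough illustration of how such a DMS migration might be scoped, the sketch below builds a table-mapping document in the JSON rule format that DMS replication tasks accept. The schema name `APPDATA` is hypothetical, and the lowercase transformation is an assumption that is often useful when the target is PostgreSQL, which folds unquoted identifiers to lowercase where Oracle folds them to uppercase.

```python
import json

# Hypothetical Oracle schema name; the real schema is not named in this post.
SOURCE_SCHEMA = "APPDATA"


def build_table_mappings(schema):
    """Return DMS table-mapping rules: include all tables, lowercase the schema."""
    return {
        "rules": [
            {
                # Selection rule: migrate every table in the schema.
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "include-all-tables",
                "object-locator": {"schema-name": schema, "table-name": "%"},
                "rule-action": "include",
            },
            {
                # Transformation rule: rename the schema to lowercase on the target.
                "rule-type": "transformation",
                "rule-id": "2",
                "rule-name": "lowercase-schema",
                "rule-target": "schema",
                "object-locator": {"schema-name": schema},
                "rule-action": "convert-lowercase",
            },
        ]
    }


if __name__ == "__main__":
    print(json.dumps(build_table_mappings(SOURCE_SCHEMA), indent=2))
```

The resulting JSON would be supplied as the table mappings of a replication task; endpoint and task settings are out of scope for this sketch.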

2. Containerization and Deployment

The first step in containerization and deployment planning is to choose an appropriate base Docker image for the web application based on its current architecture. The web application was running on IBM WebSphere 8.5.5 Network Deployment and used IBM HTTP Server 8.5.5 as a reverse proxy within the data center. It is important to consider both compatibility and security when deciding whether to build an image from scratch or select a pre-built image on Docker Hub. The best option for this project was a pre-built image created by IBM with WebSphere already installed. IBM WebSphere Docker images are well documented, with all components made available for customization. Additionally, the images offer advanced build-phase configuration functionality, and because no third parties were involved in their development, we had greater confidence in the security of the containers. The Docker containers used for the web application were built from ibmcom/websphere-traditional 8.5.5.14 base images pulled from IBM’s public Docker Hub repository.

3. Building and Configuring the Docker Image

Using an EC2 instance purposed as a Docker sandbox, all application dependencies were copied into the container, loaded into WebSphere with the necessary configurations and committed as a web application image. This EC2 instance can also be used for automated build-phase configuration of the web application WebSphere image using Jython and shell scripts. Because the configuration steps were relatively simple and the number of dependencies was small, we chose to configure the image manually for this application. The disadvantage of this approach is that while configuration changes remain saved in committed images, the individual steps needed to reproduce the image are lost. For data connectivity, the web application used connection strings configured in WebSphere to communicate with the Oracle databases, IDMS databases and Active Directory in the data center via the VPN tunnel.
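For comparison with the manual approach, the sketch below shows one way the build steps could be captured so the image can be reproduced: a small Python helper that renders a Dockerfile which copies the application archive and runs a Jython configuration script through `wsadmin.sh`. This is not the process used in the project. The file names, the `/tmp` staging location, the install path `/opt/IBM/WebSphere/AppServer` and the `name:tag` form of the base image reference are all assumptions.

```python
from pathlib import Path

BASE_IMAGE = "ibmcom/websphere-traditional:8.5.5.14"  # tag form is assumed
WAS_HOME = "/opt/IBM/WebSphere/AppServer"  # assumed install path in the image


def render_dockerfile(app_archive, jython_script):
    """Return Dockerfile text that copies the app and runs a wsadmin script."""
    archive_name = Path(app_archive).name
    script_name = Path(jython_script).name
    lines = [
        f"FROM {BASE_IMAGE}",
        f"COPY {app_archive} /tmp/{archive_name}",
        f"COPY {jython_script} /tmp/{script_name}",
        # -conntype NONE lets wsadmin edit the configuration without a running server.
        f"RUN {WAS_HOME}/bin/wsadmin.sh -lang jython -conntype NONE "
        f"-f /tmp/{script_name}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical paths, relative to the Docker build context.
    print(render_dockerfile("build/webapp.ear", "scripts/configure.py"), end="")
```

Keeping the generated Dockerfile and the Jython script in version control preserves the individual steps that a committed image alone does not record.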

4. Deploying Web Application with AWS Services

After creating and testing the container on the sandbox instance, the web application image was pushed to a repository in Amazon Elastic Container Registry (ECR). Amazon Elastic Container Service (ECS) services and task definitions were then configured to specify how the web application containers, pulled from that repository, are deployed and scaled on ECS instances in the private subnets. Once the ECS instances were running the web application containers, external users connected and authenticated to the application through an Application Load Balancer (ALB). The following diagram provides an overview of the AWS architecture.
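To make the idea of a task definition more concrete, here is a minimal sketch that builds one as a Python dictionary for the EC2 launch type. The family name, memory size, account ID in the image URI and container port 9080 (WebSphere's usual default HTTP port) are assumptions, not values from the project. Setting `hostPort` to 0 in bridge mode requests a dynamically assigned host port, which lets a load balancer target group route to several containers on one instance.

```python
import json


def build_task_definition(image_uri, family="webapp", container_port=9080):
    """Return a minimal ECS task definition for the EC2 launch type."""
    return {
        "family": family,
        "networkMode": "bridge",
        "requiresCompatibilities": ["EC2"],
        "containerDefinitions": [
            {
                "name": family,
                "image": image_uri,
                "memory": 2048,  # hard memory limit in MiB (assumed value)
                "essential": True,
                "portMappings": [
                    # hostPort 0 asks ECS for a dynamically assigned host port.
                    {"containerPort": container_port, "hostPort": 0, "protocol": "tcp"}
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder ECR image URI; account ID and repository name are hypothetical.
    uri = "123456789012.dkr.ecr.us-east-1.amazonaws.com/webapp:latest"
    print(json.dumps(build_task_definition(uri), indent=2))
```

The dictionary keys follow the ECS task definition schema, so the same structure could be saved as JSON for the AWS CLI or passed as keyword arguments to an SDK call that registers the task definition.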

Are you interested in modernizing your applications and migrating them to the cloud? We are experts in application assessments, application rationalization and providing ready-made migration templates to help accelerate your cloud journey. Please fill out the contact us form.