By: Matthew Venne, Senior Solutions Director

It is no secret that containers make up a growing proportion of production workloads. The ability to package up applications into discrete components is certainly nothing new. Package management tools like RPM have been doing this for a while. However, the ability to orchestrate the lifecycle of these components and essentially run them anywhere has revolutionized the global Software Development Life-cycle (SDLC), and at the same time, introduced challenges for auditors and risk assessments in regulated markets.

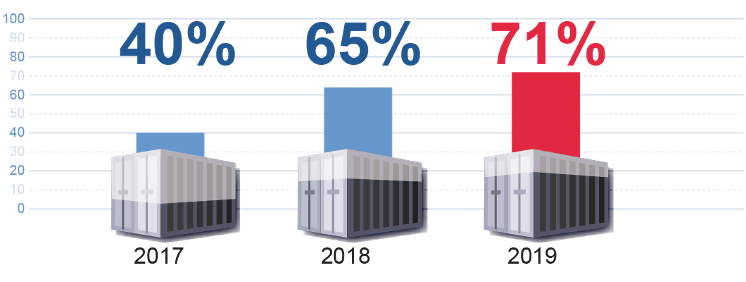

Companies Running Majority of Applications in Containers:

To lend some clarity to auditors, the FedRAMP PMO issued their initial container security guidance in March 2021.

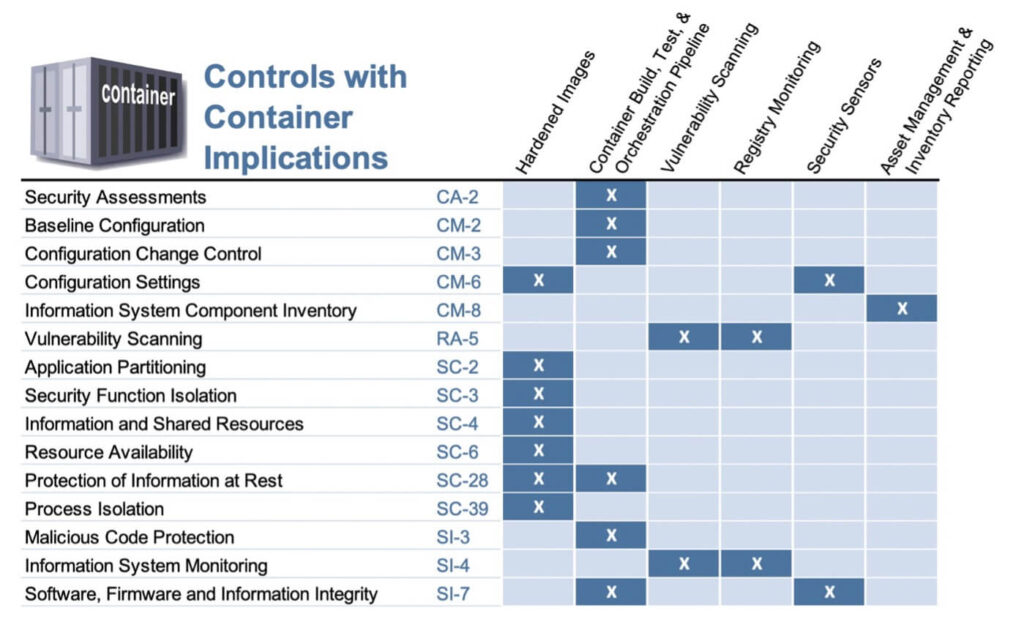

Controls with Container Implications for FedRAMP Compliance

The following table, according to guidance published by the FedRAMP PMO, summarizes the controls that must be considered for computing environments that leverage containers.

Note that the PMO is not introducing new requirements for containerized workloads. The guidance merely extrapolates the existing FedRAMP requirements and shows how they apply to containerized workloads.

Scanning Requirements for Systems Using Containers

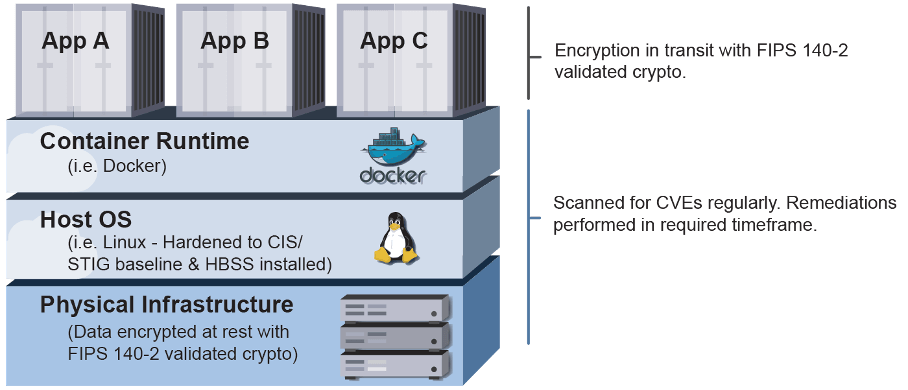

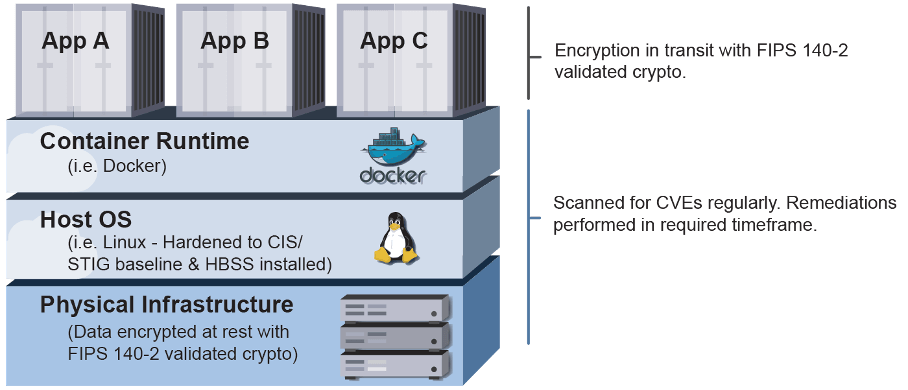

When using containers, Cloud Service Providers (CSPs) are NOT exempt from host-based security guidelines or from FIPS 140-2 (soon 140-3) encryption requirements. Containers, after all, are just a mechanism for running an application.

Strictly stated, CSPs are responsible for applying security best practices to the hosts (i.e., worker nodes) and to the containers running on them. If you are hosting your workloads on a public cloud such as AWS, you will inherit the security and maintenance of the physical infrastructure, since those are covered by the provider under the Shared Responsibility model.

Hardened Images

| Applicable Controls: | CM-6, SC-2, SC-3, SC-4, SC-6, SC-28, and SC-39 |

Just as CSPs are required to harden the OS of their VMs, they must harden the container images themselves. If an OS base image is used for containers, the container image must be hardened to the CIS benchmark for that particular OS. The challenge here is that many tools used to monitor CIS and STIG hardening compliance of VMs, like Tenable SC, cannot do this for container images. It requires special tools that can peer inside the container image and import custom security checks, based on CIS/STIG benchmarks, so that the security tool can determine whether the image is compliant.

If using a “distro-less” image – an image that ONLY includes your application and its dependencies, or an image built entirely from scratch – it is incumbent on the CSP to work with their Third Party Assessment Organization (3PAO) to develop a custom benchmark and demonstrate that their images are sufficiently hardened.

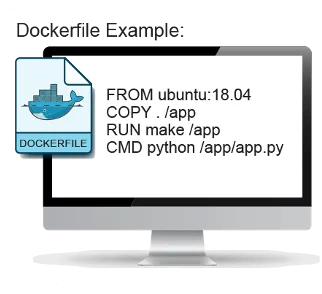

Point of clarity: Why do some containers have a base image like Ubuntu or Debian?

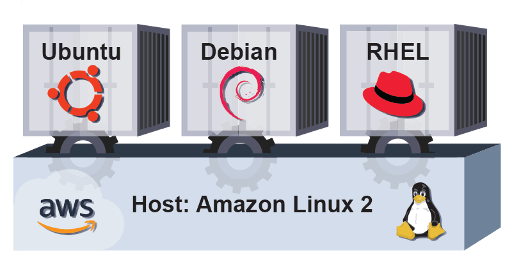

One of the most common points of confusion with containers is understanding what is meant by a base image. One frequently asked question is: “Can I run an Ubuntu container image on a RHEL worker node?” (The answer is yes.) To help clarify, let’s take a step back and reframe the question.

If running an app on an Ubuntu (or other) VM and installing dependencies, one of the fastest ways to containerize that app is to install the dependencies with apt-get since build scripts are already written and the versions of any dependencies will be easily translatable. Often, developers will use a container image that mirrors their non-containerized app’s host VM, like Ubuntu, providing a familiar file system structure and package management tools.

This removes many of the pain points of containerization and also explains why the container image OS often differs from the host OS. However, these “OS” based images usually include components that the application does not need in order to work. For example, your application may not need bash or curl to run, but those components will be included in an OS image. This is why an OS based container image used in a FedRAMP environment must be hardened to its specific CIS or STIG benchmark.

All federal workloads require the use of FIPS 140-2 encryption at all levels, and containers are no different. The main issue most CSPs face when pursuing a federal ATO is that they did not build their application using FIPS 140-2 validated cryptographic modules, which is most commonly a problem for encryption in transit. The route most CSPs take is to implement a service mesh that meets these requirements. Service meshes typically integrate seamlessly and add other features, such as finer-grained control of service-to-service routing and improved observability. However, they do introduce additional overhead, since they require a sidecar proxy alongside each running container. The other option is to rebuild the containers from the ground up to use validated cryptographic modules. This is often avoided because it is too labor intensive and/or because the cryptographic module originally used may not have a FIPS-validated equivalent. If a CSP does choose to build the FIPS crypto into the actual containers, a good place to start would be this GitHub repository. There is an interesting undertaking with Cilium to incorporate a sidecar-less service mesh; once that incorporates FIPS validated crypto, it may be an attractive option.

Container Build, Test, and Orchestration Pipeline

| Applicable Controls: | CA-2, CM-2, CM-3, SC-28, SI-3, and SI-7 |

This is an interesting requirement because it makes having a Continuous Integration/Continuous Delivery (CI/CD) pipeline for containers a strict requirement for FedRAMP. This is required even if that pipeline and the test environment reside OUTSIDE of the system boundary. This is for good reason, as it would be untenable to manage a catalog of container images without relying heavily on automated builds and testing. Before building a pipeline, it is important to understand the hierarchy of components and how they interact with each other. Here is a link to an excellent blog by AWS that covers that hierarchy.

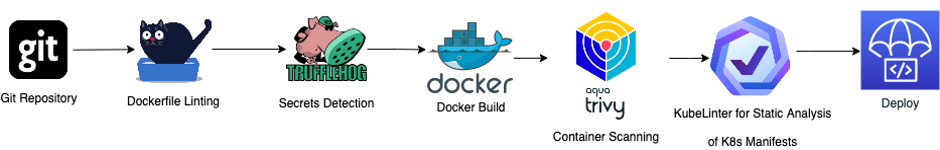

Example CI/CD Pipeline Build for Compliance:

A CI/CD pipeline might include the following elements (note that there are multiple tools that may be appropriate for each phase):

- Git Repository – Uses a version control system to store versions of code and to track and save all changes made to a project

- Linting the Dockerfile – Analyzes the Dockerfile and helps build best-practice Docker images

  - Ensures secure builds (e.g., ensuring the last user in the Dockerfile is not root)

  - Ensures the base image has a 3PAO approved baseline (or approved container registry)

- Secrets Detection – Ensures secrets (passwords, API keys) are not embedded in the image, using tools like TruffleHog

- Docker Build – Builds the container image

- Container Scanning – Scans the latest build for CVEs

- Static Container Analysis – Scans Kubernetes manifests

- Deployment – Code deployed through a carefully constructed CI/CD pipeline should not introduce unnecessary risk associated with the pipeline itself
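The two Dockerfile linting checks named above (final user is not root, base image comes from an approved baseline) can be sketched in a few lines of Python. This is a minimal illustration, not a replacement for a real linter: the function name, the `registry.example.internal/hardened/` prefix, and the finding messages are all hypothetical, line continuations are ignored, and each build stage is assumed to start without a user set.

```python
from typing import List, Sequence

# Hypothetical allowlist; a real one would list the registries or images
# that the 3PAO has approved as baselines.
APPROVED_BASE_PREFIXES = ("registry.example.internal/hardened/",)


def lint_dockerfile(text: str,
                    approved_prefixes: Sequence[str] = APPROVED_BASE_PREFIXES) -> List[str]:
    """Return a list of findings; an empty list means both checks passed."""
    findings: List[str] = []
    stage_aliases = set()   # names introduced by "FROM ... AS <alias>"
    last_user = None        # USER in effect for the final stage

    for raw in text.splitlines():
        parts = raw.strip().split()
        if not parts or parts[0].startswith("#"):
            continue  # skip blank lines and comments
        instruction = parts[0].upper()

        if instruction == "FROM":
            # Drop optional flags such as --platform=linux/amd64.
            args = [p for p in parts[1:] if not p.startswith("--")]
            if not args:
                continue
            image = args[0]
            last_user = None  # simplification: a new stage starts with no USER
            is_known = (image.lower() == "scratch"      # empty base, assumed acceptable here
                        or image in stage_aliases       # earlier stage of this build
                        or image.startswith(tuple(approved_prefixes)))
            if not is_known:
                findings.append(f"base image not on the approved list: {image}")
            if len(args) >= 3 and args[1].upper() == "AS":
                stage_aliases.add(args[2])
        elif instruction == "USER" and len(parts) > 1:
            last_user = parts[1]

    if last_user is None:
        findings.append("final stage sets no USER (assumed to default to root)")
    elif last_user.split(":")[0] in ("root", "0"):
        findings.append("final USER is root")
    return findings
```

In a pipeline, a non-empty result would fail the lint stage before the Docker Build step runs.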

Ultimately the FedRAMP PMO is looking for a pipeline to have mechanisms in place to prevent unauthorized (i.e., insecure) images from being deployed into production.

Vulnerability Scanning for Container Images and Registry Monitoring

| Applicable Controls: | RA-5, SI-4 |

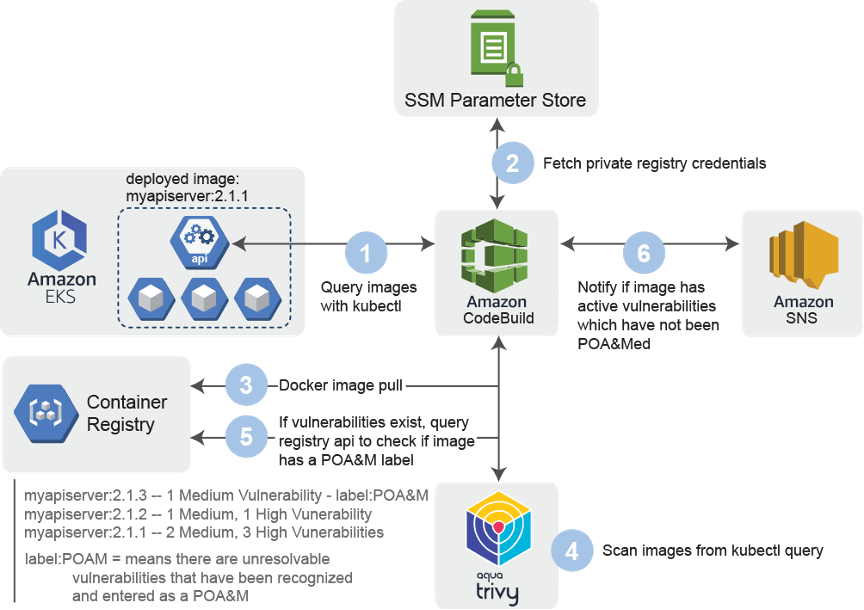

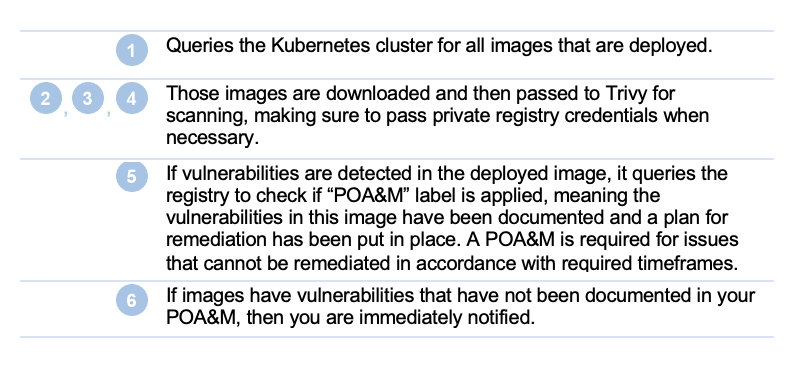

Vulnerability scanning and registry monitoring are both required and closely related. Just as all VMs must be scanned for vulnerabilities every 30 days, so must all container images. Any vulnerabilities found in those containers must be either remediated in accordance with their severity or given a Plan of Action & Milestone (POA&M), exactly as any other vulnerabilities would be handled.
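To make "remediated in accordance with their severity" concrete, here is a small Python sketch that computes a due date per finding and lists the ones that are overdue and not covered by a POA&M. The `Finding` type and function names are hypothetical, and the 30/90/180-day windows are an assumption based on the commonly cited FedRAMP continuous monitoring timelines for high, moderate, and low findings; confirm the current values with your 3PAO.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Iterable, List

# Assumed remediation windows in days (verify against current FedRAMP guidance).
REMEDIATION_DAYS = {"high": 30, "moderate": 90, "low": 180}


@dataclass(frozen=True)
class Finding:
    vuln_id: str          # scanner or CVE identifier
    image: str            # image reference the finding was reported against
    severity: str         # "high", "moderate", or "low"
    detected_on: date     # date the scanner first reported the finding
    has_poam: bool = False


def due_date(finding: Finding) -> date:
    """Date by which the finding should be remediated."""
    return finding.detected_on + timedelta(days=REMEDIATION_DAYS[finding.severity.lower()])


def overdue_findings(findings: Iterable[Finding], today: date) -> List[Finding]:
    """Findings past their due date that are not tracked in a POA&M."""
    return [f for f in findings if not f.has_poam and today > due_date(f)]
```

Scanner output often uses other severity labels (for example "critical" or "medium"), so a real implementation would need to normalize them before the lookup.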

There are many tools capable of scanning container images for CVEs, such as Anchore, Trivy, Clair, Snyk, Sysdig, and Tenable IO. These tools are often integrated directly into the registry or run via the aforementioned CI/CD pipeline. Some registries, like AWS ECR, natively support scanning of all images pushed to them, while other registries, like Harbor, allow hooks to be published that integrate with container scanners, automating the scanning of all images in the registry. However, there are often differences between what is in the container registry and what is actually deployed in production.

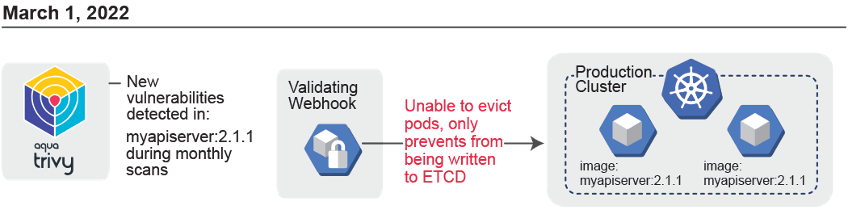

This is where Registry Monitoring comes into play. The FedRAMP PMO is looking for evidence of a mechanism to monitor and alert when container images are deployed in production that have unremediated or un-POA&Med vulnerabilities.
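The core comparison behind such a mechanism can be sketched as follows. This is an illustration under stated assumptions, not a FedRAMP-prescribed design: the function name and data shapes are hypothetical, image references are assumed to be normalized (ideally to digests) so that the deployed list and the scan results use the same keys, and the `NOT_SCANNED` marker is invented for the example.

```python
from typing import Dict, Iterable, List, Set


def images_to_alert_on(deployed_images: Iterable[str],
                       open_findings: Dict[str, Set[str]],
                       poam_ids: Set[str]) -> Dict[str, List[str]]:
    """Map each deployed image that needs attention to the reason(s) why.

    deployed_images: image references currently running in production.
    open_findings:   image reference -> IDs of unremediated vulnerabilities
                     from the most recent registry scan.
    poam_ids:        vulnerability IDs already tracked in a POA&M.
    """
    alerts: Dict[str, List[str]] = {}
    for image in sorted(set(deployed_images)):
        if image not in open_findings:
            # Running in production but absent from the scan results.
            alerts[image] = ["NOT_SCANNED"]
            continue
        untracked = sorted(open_findings[image] - poam_ids)
        if untracked:
            alerts[image] = untracked
    return alerts
```

The deployed list would typically come from the orchestrator's API and the findings from the registry scanner; wiring those sources in, and routing the result to an alerting channel, is left out here.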

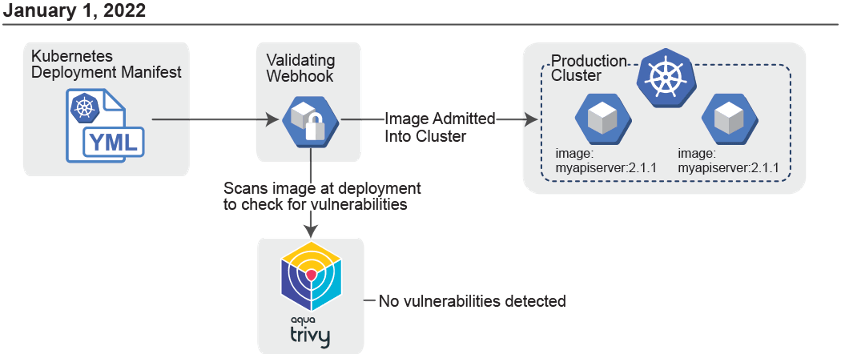

If using Kubernetes, Admission Controllers immediately come to mind – i.e. ImagePolicyWebhooks** (requires access to configure the API server and is thus not supported in managed Kubernetes services such as EKS), ValidatingWebhooks, or OPA Gatekeeper. They are great options but may not be sufficient on their own, as they only prevent vulnerable containers from being admitted into the cluster; they have no ability to act on vulnerabilities that are discovered AFTER a container is already running.

**AWS does offer a solution to simulate an ImagePolicyWebhook in EKS by locking down your ECR registries using ECR’s native vulnerability scanning. This helps close the gap on preventing vulnerable images from being deployed. However, just as with true ImagePolicyWebhooks, there are still some gaps:
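As an illustration of the validating webhook option, the decision logic can be sketched as a pure Python function that takes an `admission.k8s.io/v1` AdmissionReview request for a Pod and returns the review response. The function names and the idea of a precomputed set of blocked image references are assumptions for the example; serving this over HTTPS and registering it with a ValidatingWebhookConfiguration are omitted, and ephemeral containers are not inspected.

```python
from typing import Any, Dict, List, Set


def pod_images(pod_spec: Dict[str, Any]) -> List[str]:
    """Collect image references from init containers and regular containers."""
    containers = pod_spec.get("initContainers", []) + pod_spec.get("containers", [])
    return [c["image"] for c in containers]


def review_pod(admission_review: Dict[str, Any], blocked_images: Set[str]) -> Dict[str, Any]:
    """Build the AdmissionReview response for a Pod create or update request."""
    request = admission_review["request"]
    spec = request["object"].get("spec", {})
    denied = [image for image in pod_images(spec) if image in blocked_images]

    # The response must echo the request uid and state whether it is allowed.
    response: Dict[str, Any] = {"uid": request["uid"], "allowed": not denied}
    if denied:
        response["status"] = {
            "code": 403,
            "message": "images with unremediated vulnerabilities: " + ", ".join(denied),
        }
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }
```

Because the check runs only at admission time, it illustrates the limitation described above: a pod admitted yesterday is not re-evaluated when a new vulnerability is published today.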

- It only protects images in ECR

- It only prevents image pulls. If pods have already been deployed, you will need to force a pull of images for the deny policy to be enforced:

  - Scaling the deployment down and back up.

  - All pods would be required to have an ImagePullPolicy of Always.

  - Do a refresh of all worker nodes.

- If you block your cluster from pulling certain images, your application may break if a blocked image is critical to its functionality. While not necessarily a deal breaker, it does necessitate a healthy discussion among all the stakeholders involved about which risks are acceptable.

The following diagram provides a high-level example of how a CSP might address Registry Monitoring requirements. It would be run independently**, outside of the CI/CD pipeline, at least once a month as part of standard continuous monitoring.

Example Registry Monitoring Scenario:

**Before implementing this in production, make sure to discuss the solution and the requirements with a 3PAO or Sponsoring Agency to ensure that the solution is meeting their risk-based requirements.

There are SaaS products, such as Palo Alto PrismaCloud, Sysdig, and Qualys, that offer similar functionality, but any external system must meet its own FedRAMP requirements or be implemented in a manner that is acceptable to both the 3PAO and the Agency users. At the time of writing, PrismaCloud and Qualys were both authorized at the FedRAMP Moderate level.

Security Sensors

| Applicable Controls: | SI-7, CM-6 |

Security Sensors is a generic term used for any technology that is deployed alongside your container workloads to improve the security posture. One could make the argument that the above example in Registry Monitoring could be considered a Security Sensor as well.

Perhaps the most commonly used Security Sensor is Falco by Sysdig, which leverages kernel modules or eBPF to monitor all activity on a host. It is fully extensible and allows users to identify when anomalous activity occurs in a container environment, such as a shell being opened in a container or a container reading from or writing to a sensitive folder on the host like /etc or /proc. AWS provides a solution that integrates Falco with AWS Security Hub as follows:

- Falco logs are forwarded to CloudWatch Logs via a FluentBit DaemonSet.

- A subscription filter on those log events triggers a Lambda function.

- The Lambda function posts the events into Security Hub for centralized monitoring.
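The Lambda step in that flow can be sketched as below. This is not the AWS solution's actual code: it assumes Falco is configured for JSON output and that each CloudWatch log message is the raw Falco JSON alert, the priority-to-severity mapping and the `Types` value are illustrative choices, and the finding fields should be checked against the AWS Security Finding Format documentation. Actually sending the findings (for example with the Security Hub `BatchImportFindings` API) is left out so the sketch runs without AWS access.

```python
import base64
import gzip
import json
from typing import Any, Dict, List

# Assumed mapping from Falco priorities to Security Hub severity labels.
SEVERITY_BY_PRIORITY = {
    "emergency": "CRITICAL", "alert": "CRITICAL", "critical": "CRITICAL",
    "error": "HIGH", "warning": "MEDIUM", "notice": "LOW",
    "informational": "INFORMATIONAL", "debug": "INFORMATIONAL",
}


def decode_log_events(event: Dict[str, Any]) -> List[Dict[str, Any]]:
    """CloudWatch Logs delivers subscription data as base64-encoded gzip JSON."""
    compressed = base64.b64decode(event["awslogs"]["data"])
    return json.loads(gzip.decompress(compressed))["logEvents"]


def to_finding(log_event: Dict[str, Any], account_id: str, region: str) -> Dict[str, Any]:
    """Translate one Falco alert into a Security Hub finding dictionary."""
    alert = json.loads(log_event["message"])
    container_id = alert.get("output_fields", {}).get("container.id", "unknown")
    return {
        "SchemaVersion": "2018-10-08",
        "Id": f"falco/{log_event['id']}",
        # Default product ARN used for findings from custom integrations.
        "ProductArn": f"arn:aws:securityhub:{region}:{account_id}:product/{account_id}/default",
        "GeneratorId": alert["rule"],
        "AwsAccountId": account_id,
        "Types": ["Unusual Behaviors/Container"],
        "CreatedAt": alert["time"],
        "UpdatedAt": alert["time"],
        "Severity": {"Label": SEVERITY_BY_PRIORITY.get(alert["priority"].lower(), "INFORMATIONAL")},
        "Title": alert["rule"],
        "Description": alert["output"],
        "Resources": [{"Type": "Other", "Id": container_id}],
    }


def build_findings(event: Dict[str, Any], account_id: str, region: str) -> List[Dict[str, Any]]:
    """Decode a subscription event and convert every Falco alert in it."""
    return [to_finding(e, account_id, region) for e in decode_log_events(event)]
```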

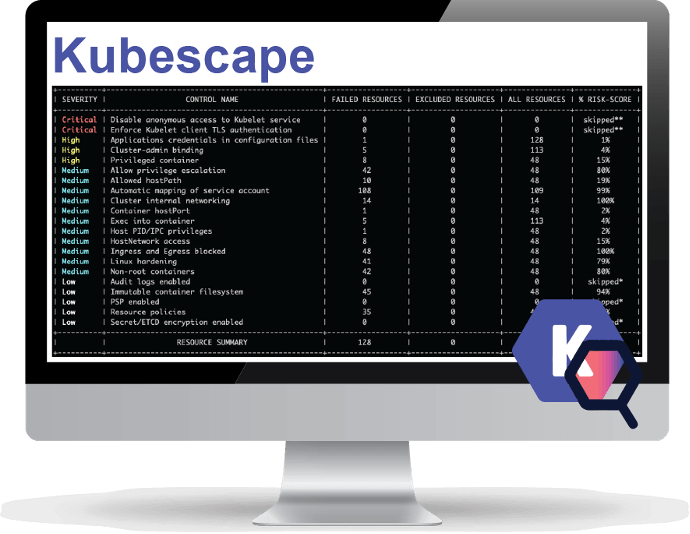

Another interesting security sensor technology is found in tools like Kubescape by ArmoSec – which query the Kubernetes API to monitor a system’s entire cluster configuration. Such tools can be compared to AWS Config, but for a Kubernetes environment.

| Example Kubescape Output: |

While not exhaustive, these examples have been provided to reflect the various types of tools available that provide security sensors for container-based systems. A 3PAO or AO should be consulted to ensure any tool selected as part of the monitoring stack meets FedRAMP requirements and is acceptable with regard to addressing system-specific risks.

Asset Management and Inventory Reporting for Deployed Containers

| Applicable Controls: | CM-8 |

Scans, architectural diagrams and the inventory make up the Holy Trinity of FedRAMP assessments. They all have to be telling the same story. This is why it is critical to be inventorying and tracking what containers are actually deployed.

Since some container orchestration solutions assign random names to containers/pods at runtime, it is important to make sure the inventory tracks the resource types that create them. For example, if using Kubernetes, make sure the inventory tracks Deployments, StatefulSets, Jobs, and DaemonSets, not individual pods.
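One way to build such an inventory is to roll each pod up to its controlling workload using the `ownerReferences` metadata that Kubernetes sets on managed objects. The sketch below works on plain dictionaries shaped like Kubernetes API objects; the function names are hypothetical, and fetching the pods and ReplicaSets from the cluster is left out. Pods created by a Deployment are owned by a ReplicaSet, so the ReplicaSet is resolved one level further.

```python
from collections import Counter
from typing import Any, Dict, Iterable, Optional, Tuple


def controller_of(obj: Dict[str, Any]) -> Optional[Tuple[str, str]]:
    """Return (kind, name) of the owner marked as controller, if any."""
    for ref in obj["metadata"].get("ownerReferences", []):
        if ref.get("controller"):
            return ref["kind"], ref["name"]
    return None


def workload_inventory(pods: Iterable[Dict[str, Any]],
                       replicasets: Iterable[Dict[str, Any]]) -> Counter:
    """Count running pods per (namespace, workload kind, workload name)."""
    # Index ReplicaSets so a pod's ReplicaSet owner can be traced to its Deployment.
    rs_owner = {
        (rs["metadata"]["namespace"], rs["metadata"]["name"]): controller_of(rs)
        for rs in replicasets
    }
    counts: Counter = Counter()
    for pod in pods:
        namespace = pod["metadata"]["namespace"]
        # Standalone pods have no controller and are inventoried as themselves.
        owner = controller_of(pod) or ("Pod", pod["metadata"]["name"])
        if owner[0] == "ReplicaSet":
            owner = rs_owner.get((namespace, owner[1])) or owner
        counts[(namespace, owner[0], owner[1])] += 1
    return counts
```

The resulting keys stay stable across restarts and rescheduling, which makes them easier to reconcile against scan results and architecture diagrams than pod names.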

Conclusion

FedRAMP is certainly not for the faint of heart. It is an enormous undertaking for any system, and the novelty of containers can further complicate the journey to compliance. Luckily, stackArmor has been at the forefront of digesting these requirements and translating them into real solutions for its customers using its ThreatAlert Security Platform and ATOM.

About stackArmor

stackArmor helps commercial, public sector and government organizations rapidly comply with FedRAMP, FISMA/RMF, DFARS and CMMC compliance requirements by providing a dedicated authorization boundary, NIST compliant security services, package development with policies, procedures and plans as well as post-ATO continuous monitoring services.